Robots are no longer limited to factory floors. They are increasingly used in healthcare, homes, and everyday environments to assist people with a wide range of tasks. However, programming robots is still difficult. Most robotic systems require expert knowledge and complex coding, which makes them hard to use for non-experts. This creates a major barrier for wider adoption.

To overcome this challenge, researchers developed a method called Learning from Demonstration (LfD). Instead of writing code, users teach robots new skills by showing them how to perform a task. This makes robot programming more intuitive and helps make robotics accessible to a broader range of users.

Limitations of Traditional Approaches

Traditional robot programming requires users to carefully define every action step using code. This process is time-consuming and requires specialized expertise.

Motion planning methods reduce the need to specify exact trajectories, but they still require precise instructions such as goal positions and waypoints. These programs are often rigid and need to be rewritten when the environment changes.

Reinforcement learning offers more flexibility, but it introduces its own challenges. Designing a suitable reward function usually requires deep domain knowledge, and training often takes a long time. This makes reinforcement learning difficult to apply in real-world settings.

Because of these limitations, Learning from Demonstration becomes especially attractive—particularly when a task is hard to describe using rules or rewards, or when manual programming is impractical.

Ways Humans Can Demonstrate Tasks

There are several ways users can demonstrate tasks to a robot, depending on how they interact with the system.

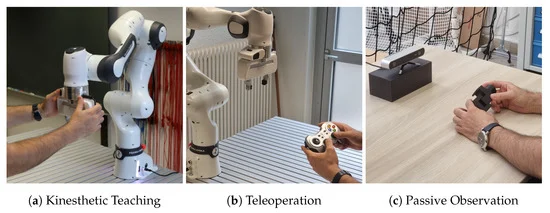

Kinesthetic Teaching

Kinesthetic teaching allows users to physically guide the robot by moving its joints directly. This method does not require extra sensors or equipment, making it simple and intuitive.

It is particularly well suited for robotic manipulators such as the KUKA iiwa (7-DoF) and Franka Emika (7-DoF) robots, where users can easily demonstrate precise motions by hand.

Teleoperation

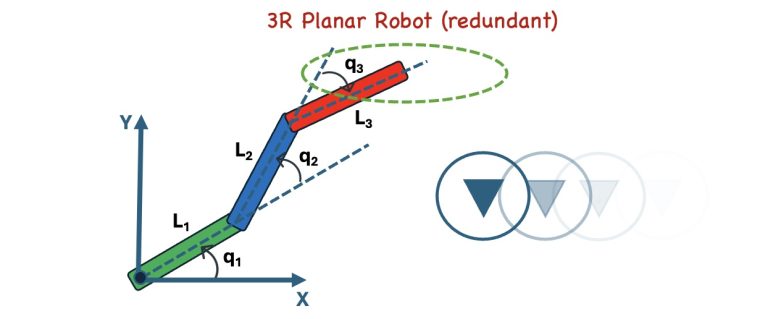

In teleoperation, users control the robot using a joystick or remote controller. Typically, the user controls only the robot’s end-effector, while the robot computes the joint movements using inverse kinematics.

This approach is useful for robots with many joints, such as humanoid or highly redundant robots, where controlling each joint directly would be overwhelming. However, computing inverse kinematics for high-degree-of-freedom robots is mathematically challenging.

Passive Observation

In passive observation, users perform a task naturally without directly interacting with the robot. Human motion is captured using tools such as 3D cameras or motion-capture systems.

This method is easy for humans, but difficult for robots. Extracting meaningful task information from raw human motion data and transferring it to a robot is a complex problem.

Key Goals of Learning from Demonstration

A central goal of LfD is to enable robots to learn tasks quickly and reliably from only a small number of demonstrations. Achieving this requires improvement in two main areas:

- Learning algorithms – how effectively the robot can learn from the data

- Demonstration quality – how informative and well-structured the human demonstrations are

Improving both aspects is essential for building robotic systems that are easy to teach, adaptable, and practical for real-world use.